
Here’s more Sensors Converge 2026 scoop from ST and Microchip

More exhibitors at Sensors Converge 2026 have shared what they will be showcasing at the event next week, May 5-7, at the Santa Clara Convention Center. That means more stops to tour!

The following exhibitors expand on the group covered in a previous report: TDK InvenSense, Murata, CeramTec, NGK Insulators, Rivian, Toborlife AI and Melexis.

STMicroelectronics (Booth #1036)

Tony Alegria, product marketing engineer, will launch an all-in-one 3D ToF lidar module with 2.3k zones for use in robotics, drones, AR/VR and other applications on May 6 at 2:55 p.m. in the Live Theater. He will also describe the new 5MP RGB-NIR CMOS image sensor for low-power Edge AI.

On May 6, ST will join a panel discussion on Edge AI sponsored by the Edge AI Foundation.

Other sessions are described on the same ST web page, as is the range of technologies at ST’s booth, everything from ST tech for Amazon Sidewalk to a MEMS inertial sensor for use in contextually aware PCs.

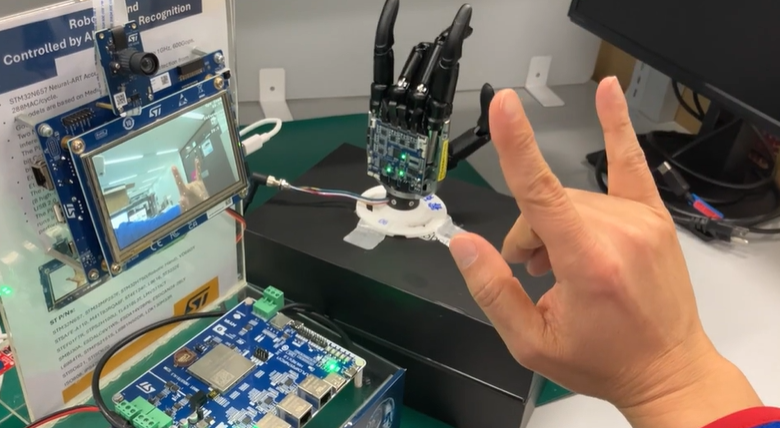

A real-time 3D hand tracking application uses a BionIT lab hand, embedding ST sensors and the Holoscan Sensor Bridge. ST has a video showing the action.

NFC wireless charging sensors, wireless AI tracking cameras and a smart cooktop interface will also be shown.

An ST-Nvidia humanoid robotic proof of concept combines ST vision, motion and motor control sensors with the Nvidia Jetson platform and Holoscan Sensor Bridge to demonstrate 3D object tracking and head-joint control.

A 3-hour introductory workshop will be held at 10 a.m. on May 7 for engineers wanting to prototype sensor-based apps using the ST High-G IMU. (Separate registration is required, and space is limited. To sign up, visit registration and select the workshop in the Add-On section.)

Microchip (Booth #922)

More than a dozen demos are expected at the Microchip booth, including an Edge AI keyword spotting solution for always-listening voice activation on a low-power microcontroller. It is optimized for embedded systems that need long battery life and fast response times.

The E-Gate demo shows contactless access control using facial recognition, with machine learning inference performed locally on the SAMA7D65, a single-core Arm Cortex-A7 microprocessor.

ST’s Brad Poole will discuss why production-ready Edge AI is harder than developing a demo at 11 a.m. on Wednesday, May 6. ST’s Swapna Gurumani will present on edge-based keyword spotting at 2:20 p.m. on May 6.