
Meta Unveils Four MTIA Chips Focused on High-Performance Inference

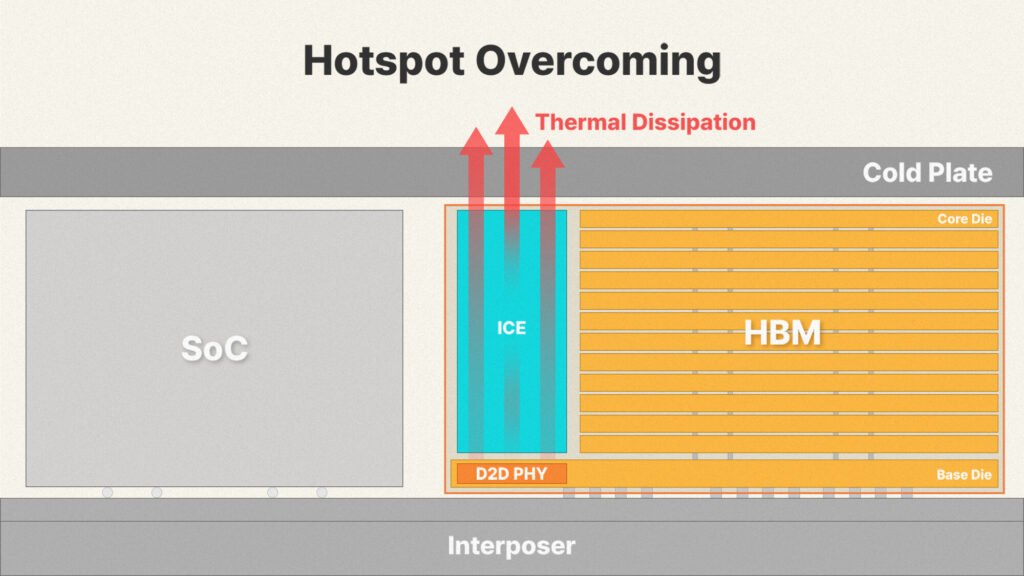

The MTIA chips also include hardware support for attention primitives and mixture-of-experts layers, along with low-precision formats tailored to inference that reduce conversion overhead. Software compatibility was a stated priority: Meta says the stack runs natively on common frameworks, so existing production models can be deployed on both GPUs and MTIA without major rewrites, which should ease adoption.

Multiple MTIA generations are built to share the same chassis, rack, and networking, allowing upgrades by swapping modules rather than refitting data center infrastructure. That modularity helps explain how Meta sustains a release cadence faster than the industry norm across data centers that span millions of chips. MTIA chips already operate at kilowatt-class power budgets and deliver petaFLOPS of compute, which puts them in competition with industry-leading solutions from NVIDIA, AMD, and other hyperscalers.

Hyperscalers are known to explore in-house ASIC designs that are comparable to NVIDIA and AMD GPUs in some areas. When a hyperscaler like Meta has a specific kind of workload that could benefit greatly from customized silicon, it can justify running the entire design and development effort behind a lineup like MTIA. Meta already uses NVIDIA and AMD GPUs for both training and inference, so the MTIA accelerators should help balance its inference side: a model is trained once, while inference keeps running for much longer. It is also worth pointing out that Meta is known for opening its rack designs through the Open Compute Project, meaning that some of the design elements seen in these ASICs and their racks could end up in other server applications as well.