Blog

AMD Instinct MI355X GPUs Surpass 1M Tokens/Sec in MLPerf 6.0

Just as important, we showed that these results are not isolated. A broad partner ecosystem submitted across four AMD Instinct GPU types that closely reproduced numbers submitted by AMD and the first three-GPU heterogeneous MLPerf submission demonstrated that AMD hardware and AMD ROCm software can orchestrate meaningful inference throughput even across systems in different geographies.

AMD Instinct MI355X GPUs: Designed for Inference from the Ground Up

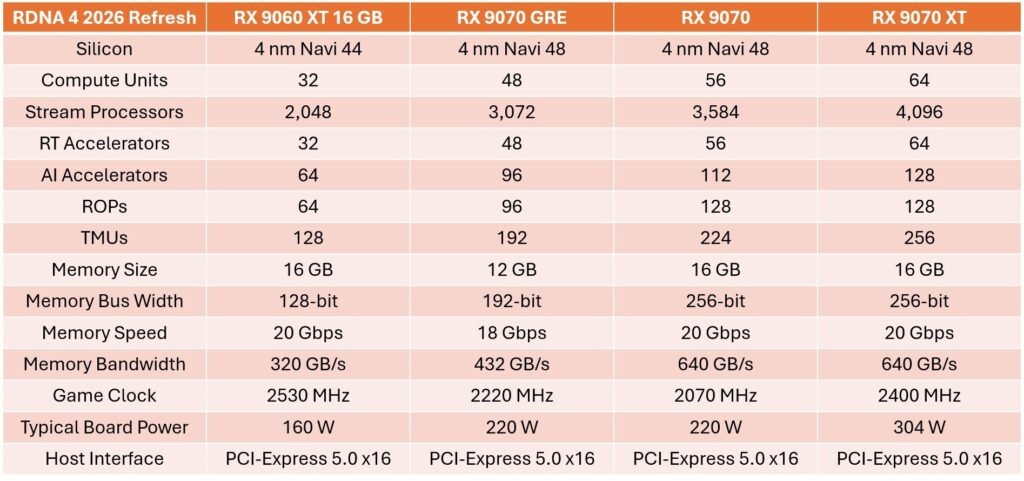

AMD Instinct MI355X GPUs are built on AMD CDNA 4 architecture with a 3 nm process, bring 185 billion transistors, add FP4 and FP6 support, and pair all of that with up to 288 GB of HBM3E memory.

With up to 10 petaflops of FP4 and FP6 performance, support for models up to 520 billion parameters on a single GPU and an industry-standard UBB8 node available in both air-cooled and direct liquid-cooled configurations, AMD Instinct MI355X GPUs are built to deliver more than speed. They also are designed to deliver large-model capacity and deployment readiness in one platform.

Defining Moments from the AMD MLPerf Inference 6.0 Submission

MLPerf Inference 6.0 results from AMD go well beyond a single proof point, revealing meaningful progress across performance, model coverage, scale and reproducibility. Several breakthroughs stand out:

1. AMD Breaks the 1M Tokens/Sec Barrier in MLPerf Inference

One of the biggest milestones in this round is that for the first time, AMD surpassed 1 million tokens per second in the MLPerf Inference benchmark. AMD crossed that threshold on Llama 2 70B in both Server and Offline benchmarks, and on GPT-OSS-120B in Offline – all at multinode scale on AMD Instinct MI355X GPUs.

The industry increasingly evaluates inference at cluster scale, where aggregate throughput and time-to-serve determine whether infrastructure is ready for deployment. Surpassing 1 million tokens per second demonstrates production-class inference throughput.

For customers, this milestone has clear benefits:

- Higher aggregate throughput for serving large user populations and larger models.

- A clear proof point that AMD Instinct MI355X GPUs can sustain performance when deployments move beyond a single box.

- Validation that first-time workloads like GPT-OSS can be enabled quickly and still scale to meaningful production output.

- A stronger foundation for the next generation of multinode and rack-scale inference deployments.

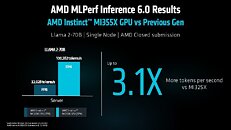

2. AMD Instinct MI355X GPUs Deliver a Clear Generational Leap vs. Previous Gen

AMD also demonstrated a major generational uplift on Llama 2 70B Server. The AMD Instinct MI355X GPU delivered 100,282 tokens per second, displaying 3.1x more throughput than the previously submitted AMD Instinct MI325X GPU results.

One of the biggest milestones in this round is that for the first time, AMD surpassed 1 million tokens per second in the MLPerf Inference benchmark. AMD crossed that threshold on Llama 2 70B in both Server and Offline benchmarks, and on GPT-OSS-120B in Offline – all at multinode scale on AMD Instinct MI355X GPUs.

The industry increasingly evaluates inference at cluster scale, where aggregate throughput and time-to-serve determine whether infrastructure is ready for deployment. Surpassing 1 million tokens per second demonstrates production-class inference throughput.

That is a meaningful jump in six months, and it reflects the power of the full stack: AMD CDNA 4 architecture, high compute density, support for FP4 and FP6, large HBM3E capacity and AMD ROCm software optimizations tuned for modern large language model inference.

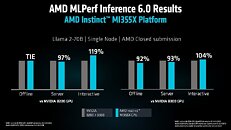

3. Llama 2 70B Shows Broad Single-Node Competitiveness

On Llama 2 70B, the most recognized large language model benchmark in MLPerf, the AMD Instinct MI355X Platform delivered highly competitive single-node results against both NVIDIA B200 and B300 GPUs. Against B200, the AMD Instinct MI355X platform tied in Offline, delivered 97% of Server performance and reached 119% of Interactive benchmark performance. Against B300 single-node, the AMD Instinct MI355X platform reached 93% in Server, 92% in Offline and 104% in Interactive.

Especially important is the breadth of the results. This is not a one-scenario story. AMD shows competitiveness across batch throughput in Offline, sustained throughput in Server and responsiveness in Interactive.

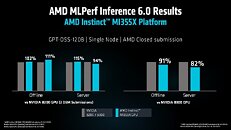

4. GPT-OSS-120B Demonstrates Fast First-Time Model Bring-Up

GPT-OSS-120B is among the most exciting parts of this Inference 6.0 submission because it was a workload run in MLPerf for the first time. First-time model enablement is difficult – the model must be brought up, optimized, validated for accuracy and pushed to competitive performance inside MLPerf timing.

Even with that complexity, the AMD Instinct MI355X platform delivered 111% of B200 Offline performance and 115% of NVIDIA B200 Server single-node performance. Against NVIDIA B300 single-node, the AMD Instinct MI355X platform reached a competitive 91% in Offline and 82% in Server.

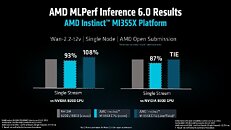

5. Wan-2.2-t2v Extends AMD into All-New Text-to-Video Inference

MLPerf Inference 6.0 also let AMD expand beyond large language models (LLMs) into text-to-video generation with a first-time submission on Wan-2.2-t2v. This benchmark has two tests: Offline and Single Stream. For this submission we focused our effort on the Single Stream scenario and as a result our submission is in the Open category rather than Closed (which requires both Offline and Single Stream). However, our Single Stream run did satisfy the Closed submission rules and thus can be directly compared to scores in Closed division.

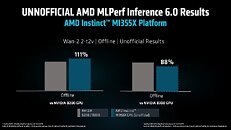

Even so, the result is impressive for a first-time AMD effort on a brand-new workload category: The AMD Instinct MI355X platform achieved 93% of NVIDIA B200 single-node performance and 87% of NVIDIA B300 single-node performance in Single Stream. After the deadline, additional tuning moved Single Stream to 108% of B200 and parity with B300, while unofficial Offline results reached 111% of B200 and 88% of B300. Post-deadline numbers were not part of the official MLPerf submission and were not verified by MLCommons, but they clearly show how quickly performance improved once we had more time to tune.

For customers, the importance of this result goes beyond the percentages themselves. It shows that AMD is expanding model coverage from LLMs into newer multimodal and generative video workloads and doing so with competitive day-one performance.

That matters because generative AI is not standing still. The models customers want to deploy are becoming broader, more multimodal, more specialized and AMD is showing it can keep pace.

6. Multinode Inference Shows Efficient Scale-Out

Interest in multinode inference is rising as models get larger, deployments become more demanding and the industry lays the groundwork for rack-scale systems such as the AMD Helios solution. Our MLPerf Inference 6.0 submission shows that the AMD Instinct MI355X is ready for that transition.

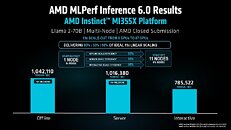

On Llama 2 70B, we scaled from one node to 11 nodes and stayed remarkably close to ideal linear scaling.

At 11 nodes and 87 AMD Instinct MI355X GPUs, we delivered 1,042,110 tokens per second in Offline, 1,016,380 tokens per second in Server and 785,522 tokens per second in Interactive. Scale-out efficiency reached 93% in Offline, 93% in Server and 98% in Interactive. Offline scale-out is the more standard path, but Server and Interactive are harder because they must maintain latency requirements as the cluster grows, which makes these results especially compelling.

This is exactly the kind of stepping stone we need as inference moves toward AMD Helios and future rack-scale deployments.

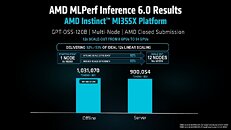

Multinode results continue on GPT-OSS-120B. These results become even more interesting since this was our first GPT-OSS multinode submission. The question was not just whether we could enable the model, but whether we could scale it efficiently across a real cluster. At 12 nodes and 94 AMD Instinct MI355X GPUs, we delivered 1,031,070 tokens per second in Offline and 900,054 tokens per second in Server. Just as important, we stayed close to ideal 12x scaling, with 92% efficiency in Offline and 93% in Server. That made GPT-OSS the second model on which we crossed the 1-million-tokens-per-second mark at multinode scale.

For customers, these scale-out results provide important proof points:

- Predictable multinode scaling grows inference clusters without losing efficiency as workloads and model sizes increase.

- Strong server-scale efficiency builds confidence for real-time inference, not just high-throughput batch processing.

- Higher scale-out efficiency improves GPU utilization, helping lower cost per token and maximizing infrastructure investment.

- Proven multinode performance gives customers a stronger path from pilot deployments to production-scale AI infrastructure

Ecosystem Scale and Reproducibility Across Partner Submissions

Another major highlight for AMD in the MLPerf Inference 6.0 submission is ecosystem momentum. This round resulted in a tie for most partners submitting on AMD Instinct hardware with nine: Cisco, Dell, Giga Computing, HPE, MangoBoost, MiTAC, Oracle, Supermicro and Red Hat.

Those submissions spanned four AMD Instinct GPU types: MI300X, MI325X, MI350X and MI355X. It shows that the ecosystem is not limited to one flagship configuration; it covers multiple generations and multiple deployment models across OEM, ODM and cloud-style platforms.

This reproducibility is especially powerful. On AMD Instinct MI355X GPUs, partner results landed within 4% of submission made by AMD, and some landed within 1%, even on workloads run for the first time. That is a very strong signal that these numbers are not fragile lab artifacts; they are reproducible across real partner systems thanks to predictable AMD hardware and AMD ROCm software.

For customers, that means more than choice. It means confidence that the performance AMD demonstrates can be re-created across the broader ecosystem, reducing deployment risk and accelerating time to production.

First 3-GPU Heterogeneous Submission Demonstrates Flexible Inference Across Geographies

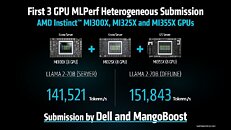

One of the most forward-looking results is the first MLPerf heterogeneous submission built across three AMD Instinct GPU types: MI300X, MI325X and MI355X. Submitted by Dell and MangoBoost, the configuration reached 141,521 tokens per second on Llama 2 70B Server and 151,843 tokens per second on Llama 2 70B Offline.

An especially important detail is geography. The AMD Instinct MI355X platform was located in Dell’s lab in the United States, while the Instinct MI300X and MI325X platforms were in Korea. That makes this more than a mixed-generation inference story, it is also a proof point for orchestration across systems in different geographies.

For customers, the value is obvious:

- Existing AMD Instinct GPU deployments can be extended rather than ripped out and replaced.

- Mixed-generation hardware can be orchestrated intelligently to preserve utilization and throughput.

- AMD ROCm software helps make non-uniform environments more practical, predictable and cost-effective.

- AMD is demonstrating a path toward flexible, future-ready infrastructure instead of one-shot refresh cycles.

How AMD ROCm Software Drives Performance, Scale and Model Enablement

Every major result in the AMD MLPerf Inference 6.0 submission is connected by one common thread: AMD ROCm software. It turned AMD Instinct MI355X hardware into a deployable platform for competitive single-GPU inference, cluster-scale throughput, heterogeneous orchestration and first-time model bring-up.

In the submission, AMD ROCm software helped enable efficient FP4 execution, optimized GPU-to-GPU communication for multinode scaling, dynamic workload distribution for heterogeneous inference and day-zero model readiness for models like Llama, Wan and GPT-OSS.

For customers, AMD ROCm software delivers several practical advantages:

- Optimized model performance for modern generative AI data types and inference kernels.

- Seamless multinode scaling with efficient communication and orchestration.

- Heterogeneous workload distribution across AMD Instinct GPU generations.

- Faster readiness for emerging models and new workload categories.

That is why ROCm software is such an important part of the AMD story. It is not merely helping AMD post one strong benchmark result, it enables performance, scalability, flexibility and reproducibility across the entire Instinct portfolio.

Annual Cadence Is Building Toward AMD Instinct MI400 Series and Helios Rack-Scale Solutions

The broader context behind these results is momentum. AMD is executing on an annual cadence for Instinct GPUs – and that consistency matters. AMD Instinct MI300X GPUs established a strong generative AI foothold in 2023. AMD instinct MI325X GPUs extended that foundation in 2024 with higher compute and HBM3E. In 2025, the AMD instinct MI350 Series, including the MI355X GPU, pushed the platform forward again with new AI data types, larger-model capacity and the inference gains shown throughout this submission.

And in 2026, AMD plans to advance to the AMD Instinct MI400 Series GPUs based on next-generation AMD CDNA 5 architecture, extending this annual cadence into the next era of rack-scale AI and laying the foundation for the AMD Helios rack-scale solution. For customers, that provides confidence that AMD is not simply delivering a strong result today but building a long-term inference platform that can scale with model size, workload diversity and production deployment requirements.

Final Takeaway

The AMD MLPerf Inference 6.0 submission marks a major step forward for AMD and its generative AI story. Across all-new workloads, AMD Instinct MI355X GPUs delivered highly competitive single-node results, incredibly efficient multinode scale-out performance, first-time model bring-up on GPT-OSS-120B and Wan-2.2-t2v, and a milestone of delivering more than 1 million tokens per second at cluster scale.

At the center of all of this is AMD ROCm software, and a disciplined annual roadmap cadence. From the AMD Instinct MI300X GPU to the MI325X GPU to the MI350 Series featuring the AMD Instinct MI355X GPU, AMD is moving quickly, expanding model support, and building the software and systems foundation needed for future rack-scale AI deployments. With the AMD Helios rack-scale solution powered by the AMD Instinct MI400 Series and future AMD Instinct generations on the horizon, MLPerf Inference 6.0 reinforces a clear message: AMD is not just participating in the generative AI inference transition, it is helping define what production-ready GenAI infrastructure looks like.

For readers interested in the deeper technical story behind these results, two new AMD ROCm blogs provide additional detail on the MLPerf Inference v6.0 submission. “AMD Instinct GPUs MLPerf Inference v6.0 Submission” explores the hardware and software work behind these results, while “Reproducing the AMD MLPerf Inference v6.0 submission result” walks through how to replicate the benchmarks on AMD Instinct hardware, reinforcing AMD’s commitment to openness, transparency and reproducibility in AI inference.