TDK’s SensorGPT Shortens the Edge AI Training Cycle

Acquiring and curating high-fidelity sensor data remains the primary bottleneck in deploying artificial intelligence at the edge. Traditional supervised learning models require massive datasets that have historically been gathered through manual collection, cleaning, and labeling, a process that often accounts for the vast majority of a project’s development timeline.

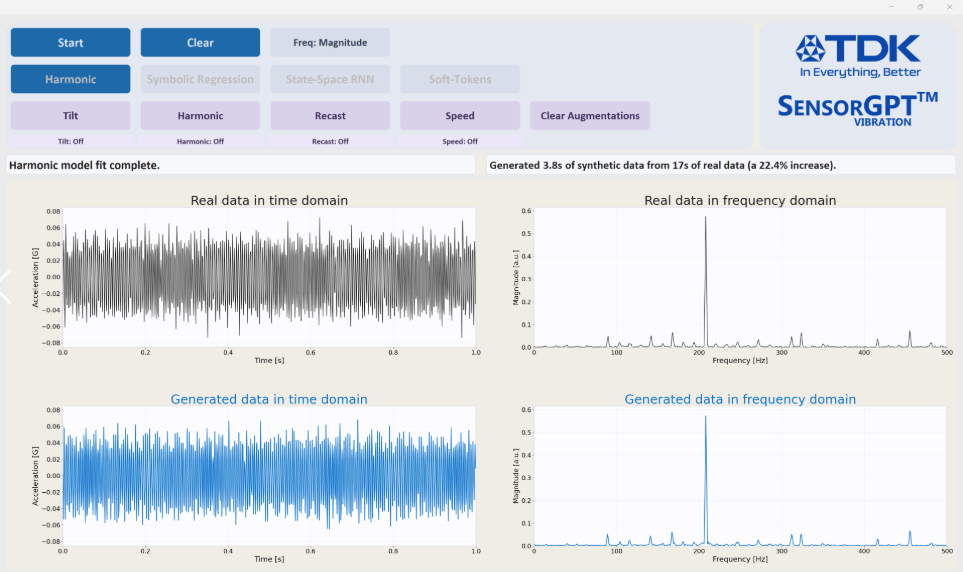

TDK’s recently introduced SensorGPT addresses this inefficiency by using generative AI techniques to synthesize sensor telemetry, effectively decoupling model training from the constraints of physical data logging.

The company’s framework employs a multi-modal approach to data generation, integrating physics-based simulations with statistical signal processing. Unlike standard data augmentation techniques that rely on basic noise injection, SensorGPT generates datasets that maintain the physical integrity of the transducer’s output.

As a result, engineers can simulate complex environmental variables, such as thermal drift, mechanical vibration, and signal attenuation, which are often difficult to capture consistently in a laboratory environment. With a claimed 90% similarity between synthetic and real-world data, SensorGPT is intended to enable the development of robust inference models with significantly less reliance on field-tested samples.
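To make the distinction from plain noise injection concrete, here is a minimal Python sketch that layers the effects named above (thermal drift, mechanical vibration, and attenuation) onto a toy signal. It is not TDK's method; SensorGPT's internals are not described here. The function name `synth_sensor_trace`, the 1 Hz base signal, and every parameter value are illustrative assumptions.

```python
import math
import random


def synth_sensor_trace(n_samples=1000, sample_rate_hz=100.0,
                       drift_per_s=0.002, vib_freq_hz=12.0, vib_amp=0.05,
                       attenuation=0.9, noise_std=0.01, seed=0):
    """Return a toy sensor trace as a list of floats.

    All effects are simplified stand-ins (assumptions), not a model of
    any real transducer:
      - attenuation: scales the underlying 1 Hz signal
      - drift_per_s: linear offset growth standing in for thermal drift
      - vib_freq_hz / vib_amp: sinusoidal mechanical vibration
      - noise_std: Gaussian measurement noise
    """
    rng = random.Random(seed)  # seeded so the trace is reproducible
    trace = []
    for i in range(n_samples):
        t = i / sample_rate_hz
        signal = attenuation * math.sin(2 * math.pi * 1.0 * t)
        drift = drift_per_s * t
        vibration = vib_amp * math.sin(2 * math.pi * vib_freq_hz * t)
        noise = rng.gauss(0.0, noise_std)
        trace.append(signal + drift + vibration + noise)
    return trace
```

Basic noise injection corresponds to varying only `noise_std`. Sweeping the drift, vibration, and attenuation parameters instead yields traces whose variation comes from modeled physical effects, which is the general idea the article attributes to physics-based generation.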

Putting the Horse Before the Cart

From an architectural perspective, the platform facilitates “assisted annotation,” automating the labeling process for large-scale datasets. This is particularly relevant for ultra-low-power edge devices where model optimization is critical.

By training on high-fidelity synthetic data, developers can account for rare “edge case” failure modes that would otherwise take months to observe in a real-world setting. Consequently, the development cycle for a typical edge AI application is projected to drop from a five-month average to approximately three weeks.

The emergence of “physical AI” requires a bridge between digital logic and the chaotic variables of the analog world. SensorGPT serves as this bridge by creating a feedback loop: Synthetic data trains the initial model, and the limited real-world data subsequently gathered is used to refine the generative parameters.
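The feedback loop can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not SensorGPT's implementation: the "generator" is a Gaussian with two parameters, the "model" is a simple threshold detector, and the names `generate`, `train_threshold`, and `refine_params` are hypothetical.

```python
import random
import statistics


def generate(params, n, rng):
    """Hypothetical generator: Gaussian readings around an offset."""
    return [rng.gauss(params["offset"], params["noise_std"]) for _ in range(n)]


def train_threshold(samples, k=3.0):
    """Toy 'model': accept readings within k standard deviations of the mean."""
    mu = statistics.fmean(samples)
    sigma = statistics.stdev(samples)
    return mu - k * sigma, mu + k * sigma


def refine_params(params, real_samples, weight=0.5):
    """Blend generator parameters toward statistics of a small real dataset.

    weight=0 keeps the original parameters; weight=1 adopts the real ones.
    """
    real_mu = statistics.fmean(real_samples)
    real_sigma = statistics.stdev(real_samples)
    return {
        "offset": (1 - weight) * params["offset"] + weight * real_mu,
        "noise_std": (1 - weight) * params["noise_std"] + weight * real_sigma,
    }


def feedback_round(params, real_samples, n_synth=500, seed=0):
    """One loop iteration: train on synthetic data, then refine the generator."""
    rng = random.Random(seed)
    model = train_threshold(generate(params, n_synth, rng))
    return model, refine_params(params, real_samples)
```

Calling `feedback_round` repeatedly, each time with the refined parameters and any newly gathered field samples, mirrors the cycle described above: the model is always trained on synthetic data, while the scarce real data is spent on correcting the generator.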

The shift toward synthetic-first development represents a fundamental change in how high-reliability sensor systems are designed. It ultimately moves the industry toward a more agile, simulation-heavy methodology that prioritizes mathematical modeling over brute-force data collection.

Hear more about SensorGPT applications and capabilities, as well as its limitations and availability. Electronic Design Technology Editor Andy Turudic has an impromptu discussion with Abbas Ataya, Sr. Director of AI Systems & Software at TDK USA, in this edition of the Inside Electronics podcast.