What Are the Advantages, Costs, and Perils of Vibe Coding?

Advantages of Vibe Coding

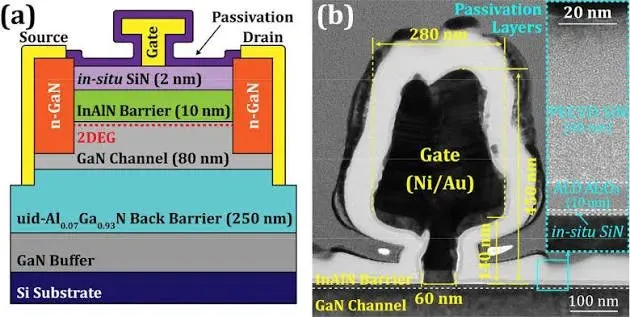

The tools for writing programs and getting them to run on hardware have been improving, but they still tend to be complex, with lots of options. Take a look at the list of command-line options for compilers and linkers and then try to figure out the implications of using each one. Likewise, configuring an integrated development environment (IDE) to handle complex builds is a challenge because of the dependencies involved. Moving into the hardware realm brings its own set of issues.

Of course, many assume vibe coding is just about generating source code and that you’re done once you have something that runs. Chatbots can generate complete programs from prompts, and the amount of source code can be massive, making it difficult for a person to verify.

There’s also the issue of run-once code. If the generated code works once and provides suitable results, then it can be discarded. Code that will be used in production over many years is another matter.

Large-language-model (LLM) chatbots such as Emerson’s NI Nigel and LabVIEW’s AI assistant focus on a more limited environment, in this case LabVIEW application development. These have a number of advantages, including training on a known-good source rather than the mixture of information found on the internet.

Costs of Vibe Coding

Many developers are using free chatbot versions on the internet, e.g., ChatGPT, Claude, and others, but most have paid versions as well. The big question is availability and how much performance and capability the free versions will retain or gain over time versus the paid versions.

The massive build-up in AI data centers powers both, but money is the end goal for investors in these data centers. Moreover, the cost of paid vibe-coding functionality needs to be balanced against the results, as compared to not using it or having a real person perform that function.

Complete open-source implementations are one possibility, including running LLMs locally, but the practicality and performance of local systems aren’t always easy to quantify. I’ve tried some local versions using one high-end NVIDIA GPGPU. However, the results were slower and less functional than the free online versions. This wasn’t a detailed comparison or performance check. Even a small company could exceed that setup by using a server with multiple GPGPUs or AI inference engines.

I’ve found chatbots to be useful but have also wasted a good bit of time going down rabbit holes. This can happen for a variety of reasons, ranging from a chatbot attempting a solution that is beyond its capabilities to the use of terminology that doesn’t fit the problem.

Finally, the issue of technical debt pushes costs into the future while retaining code that may have significant issues, which may or may not affect an application’s current operation.

Perils of Vibe Coding

We’ve already talked about technical debt, but it’s just one of the potential perils of vibe coding. Chatbots are also vulnerable to hacking and poisoning, which could cause problems similar to those from computer viruses and conventional hacking.

Programmers will tend to use vibe coding for specific tasks and incorporate the code into an application that’s then run through a compiler. Things are a bit different if a prompt will be used repeatedly over time with different data or prompt variations. The underlying chatbot may change over that period as well, so the same prompt can produce different results. The results could be better due to LLM improvements, but they won’t always be better, or even usable. Determining why the results changed may require more AI intervention.
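One lightweight way to notice this kind of drift is to keep a record of what a prompt produced the last time a person reviewed it. The Python sketch below is only an illustration: `fingerprint`, `classify_run`, and the `model_id` string are hypothetical names, not part of any chatbot’s API, and an exact-match comparison is only meaningful when the output is expected to be repeatable.

```python
import hashlib


def fingerprint(text: str) -> str:
    """Hash text after collapsing whitespace so trivial formatting changes are ignored."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def classify_run(baseline: dict, prompt: str, model_id: str, output: str) -> str:
    """Compare one prompt run against the last reviewed run of the same prompt.

    `baseline` maps a prompt fingerprint to the model identifier and output
    fingerprint that were accepted earlier. `model_id` is whatever version
    label the chatbot reports (an assumption; not every service exposes one).
    """
    key = fingerprint(prompt)
    record = {"model_id": model_id, "output": fingerprint(output)}
    previous = baseline.get(key)

    if previous is None:
        baseline[key] = record  # first run: store it as the reference
        return "new"
    if previous == record:
        return "unchanged"
    if previous["model_id"] != model_id:
        return "model-changed"  # the chatbot itself was updated
    return "output-changed"  # same model label, different answer
```

The baseline is deliberately left alone when a difference is found, so the stored reference only changes after someone has reviewed and accepted the new result.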

One way to avoid some of these issues is to employ different agents and prompts to handle tasks like building and running test cases. Another chatbot may be used to develop documentation. Analysis of the AI-generated source code by a different chatbot may also help reduce problems.
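As a rough illustration of keeping test cases separate from the code they check, the Python sketch below runs independently written cases against a function. Everything here is hypothetical: `generated_clamp` stands in for chatbot-generated code, and `CASES` stands in for tests produced by a different agent or a person.

```python
def run_cases(func, cases):
    """Run (args, expected) pairs against func and return a list of failures."""
    failures = []
    for args, expected in cases:
        try:
            actual = func(*args)
        except Exception as exc:  # a crash counts as a failure, not a pass
            failures.append((args, expected, repr(exc)))
            continue
        if actual != expected:
            failures.append((args, expected, actual))
    return failures


# Stand-in for code produced by a chatbot (hypothetical example).
def generated_clamp(value, low, high):
    return max(low, min(value, high))


# Stand-in for a plausible but wrong version of the same function.
def buggy_clamp(value, low, high):
    return min(low, max(value, high))


# Test cases written independently of the generated code.
CASES = [
    ((5, 0, 10), 5),    # inside the range
    ((-3, 0, 10), 0),   # below the range
    ((42, 0, 10), 10),  # above the range
]

if __name__ == "__main__":
    print("generated_clamp failures:", run_cases(generated_clamp, CASES))
    print("buggy_clamp failures:", run_cases(buggy_clamp, CASES))
```

Passing cases like these don’t prove the generated code is correct, but failures give a concrete starting point for review.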

In general, the chatbots designed to support all aspects of vibe coding continue to improve. Still, one needs to be realistic about their capabilities and limits. Run-once and unverified use of vibe coding will continue, but developers and users should be aware of potential problems so that they can weigh the costs and risks involved.

The biggest risk often involves allowing those unfamiliar with the underlying systems and issues to create and use vibe-coded applications without restrictions. Mandated use of AI without guidelines and guardrails is an open invitation to disaster.