The web trained AI to deceive. Now designers have to untrain it.

Your team may be shipping manipulative UX, and you may not be aware of it.

LLMs trained on the web have absorbed its worst design habits. Or, to be precise, our worst design habits.

Not to generalize, but many companies use design tricks, widely considered unethical, to deceive users into making choices they would not otherwise make. These are the so-called UX dark patterns.

Now LLMs have inherited all these malicious techniques and replicate them unprompted. The same way they repeat clunky sentence structures when you prompt them to write a social media post or blog article, they also keep churning out contact forms and pop-up messages designed to coerce people into actions they never intended to take.

The models learned from us. Now we have to learn to keep them in check.

Too good at learning how to manipulate

Let’s make something clear before we go further: an AI has no intention of making you click a button. What you see it generate comes from being trained on a web where manipulation was already baked in. An LLM can’t reason, or at least not as humans do. It can simulate reasoning because it has been trained on billions of examples that include everyday logic and social conventions, but it doesn’t have beliefs or desires.

A 2026 study from UC San Diego, titled Deception at Scale, put numbers to something many designers had only suspected. After analyzing 1,296 LLM-generated ecommerce components, researchers found that 55.8% contained at least one deceptive design pattern, while 30.6% featured two or more. The most unsettling part? Users never asked for any of these dark patterns. The models simply defaulted to them, baking deception into the UI by design.

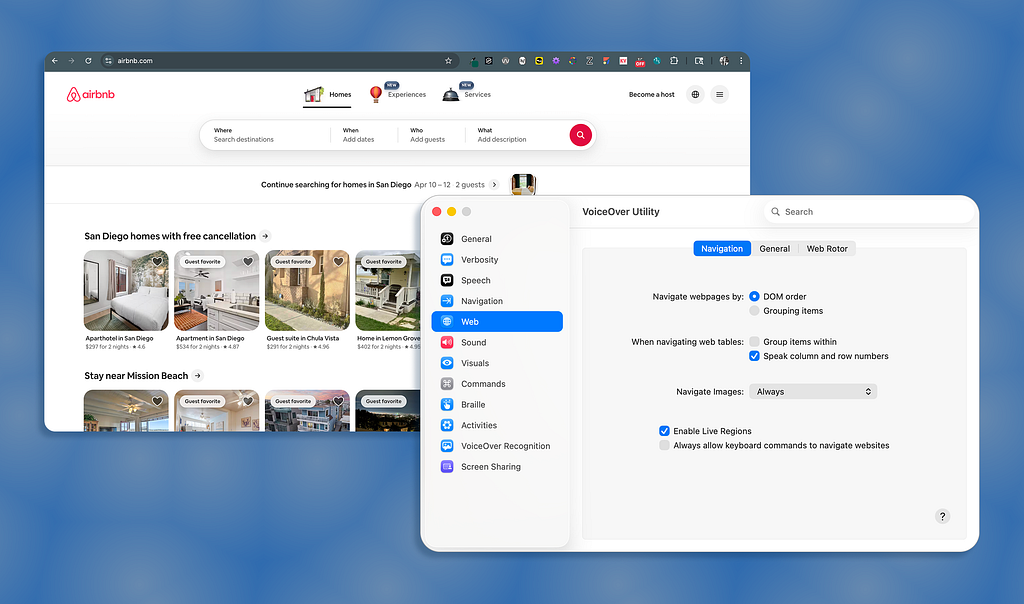

Interface interference was the dominant strategy: using color psychology to steer actions and hiding essential information. In practice, that looks like “Accept” buttons in loud, high-contrast colors next to a “Decline” link that’s barely visible, or membership cancellation flows designed to exhaust you.

When prompts emphasized business interests, such as increasing sales, the share of components with deceptive designs increased by 15.8 percentage points. This suggests that if you tell an LLM to “optimize for conversions,” you’re asking it to reach into everything it ever learned about manipulating users and apply it to your product.

Flip it around and tell the model to prioritize user interests, and dark patterns only drop by 5.8 percentage points. Pushing toward manipulation is far more effective than pushing away from it.
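To put those numbers side by side, here is a back-of-the-envelope sketch in Python. It uses only the rounded percentages quoted above, so the component counts are approximations rather than the study's exact tallies, and the constant and function names are illustrative.

```python
# Figures as quoted in this article (rounded percentages, not raw study data).
TOTAL_COMPONENTS = 1296
SHARE_AT_LEAST_ONE = 0.558      # components with one or more deceptive patterns
SHARE_TWO_OR_MORE = 0.306       # components with two or more
BUSINESS_PROMPT_SHIFT = 15.8    # percentage points, upward
USER_PROMPT_SHIFT = 5.8         # percentage points, downward


def approx_count(share: float, total: int = TOTAL_COMPONENTS) -> int:
    """Approximate number of components implied by a rounded percentage."""
    return round(share * total)


def asymmetry_ratio(up: float = BUSINESS_PROMPT_SHIFT,
                    down: float = USER_PROMPT_SHIFT) -> float:
    """How many times larger the push toward deception is than the push away."""
    return up / down


if __name__ == "__main__":
    print(approx_count(SHARE_AT_LEAST_ONE))   # about 723 components
    print(approx_count(SHARE_TWO_OR_MORE))    # about 397 components
    print(round(asymmetry_ratio(), 1))        # about 2.7
```

In other words, a business-first prompt moved the needle roughly 2.7 times as far as a user-first prompt moved it back.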

A prompt to rule them all

To borrow the famous line from a well-known novel and film trilogy, the way to stop LLMs from generating dark patterns might be a single prompt. Or, as usually happens, it might take trying and failing, again and again, until you arrive at the one prompt.

It’s been around 3.5 years since the public release of ChatGPT, which also marks the first time most of us heard what prompting meant and how important it is for “educating” an AI to give you the right answer.

In “Create a Fear of Missing Out,” researchers prompted ChatGPT to generate 20 websites. Every single one contained at least one deceptive design pattern. On average, each site included five, and the model raised no warning at any point.

DarkBench reaches the same conclusion. The benchmark tested 14 language models from OpenAI, Anthropic, Meta, Mistral, and Google across 660 prompts covering multiple categories of dark patterns. Across all models, manipulative behaviors appeared in 30% to 61% of interactions.

The research is clear. We’re not dealing with occasional mistakes. We’re looking at behavior that shows up consistently across models and scenarios. That changes how we should think about prompting and its role in counteracting deceptive design habits.

I used to think of prompting as some kind of magic phrase, but now I treat it as a discipline in its own right. Designers must prompt LLMs to avoid falling back on every conversion trick they’ve learned, which requires spelling things out in the prompt: no pre-selected add-ons, no hidden fees, no urgency cues, no asymmetric button sizing. The more specific, the cleaner the output.
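Spelling things out can be as simple as appending a fixed list of constraints to every UI generation request. The sketch below is one hypothetical way to do that in Python. The rule wording and the `build_prompt` helper are assumptions made for illustration, not a vetted checklist or any vendor's API, and the resulting string can be sent to whichever model you use.

```python
from collections.abc import Sequence

# Illustrative constraints mirroring the four examples in the text.
ANTI_DARK_PATTERN_RULES: tuple[str, ...] = (
    "Do not pre-select optional add-ons or consent checkboxes.",
    "Show the full price, including all fees, before the final step.",
    "Do not use countdown timers, scarcity claims, or other urgency cues.",
    "Give accept and decline actions the same size, contrast, and prominence.",
)


def build_prompt(task: str, rules: Sequence[str] = ANTI_DARK_PATTERN_RULES) -> str:
    """Append explicit, numbered design constraints to a UI generation task."""
    numbered = "\n".join(f"{i}. {rule}" for i, rule in enumerate(rules, start=1))
    return (
        f"{task.strip()}\n\n"
        "Follow every constraint below. If a constraint conflicts with the task, "
        "keep the constraint and say so.\n"
        f"{numbered}"
    )


if __name__ == "__main__":
    print(build_prompt("Generate the HTML for a bookstore checkout page."))
```

Keeping the rules in one shared list means the whole team prompts with the same constraints, and adding a newly spotted pattern is a one-line change.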

Deeper than your checkout flow

So far, we’ve been talking about interface-level dark patterns. The experiments above show LLM output riddled with them, including pre-checked boxes, manipulative button colors, hidden costs buried in checkout flows, and interminable cancellation pages.

However, LLMs also manipulate through conversation. They create new forms of dark patterns that have nothing to do with visual UI design. Researchers define these as manipulative or deceptive behaviors enacted in dialogue, such as exaggerated agreement or subtle privacy intrusions.

In The Siren Song of LLMs, researchers explore how we actually perceive and react to deceptive tactics in AI. One of its more uncomfortable findings is that many users didn’t recognize these dark patterns as manipulation. They saw them as normal assistance. Because the AI felt helpful, the deceptive behavior was “normalized,” leaving users unaware they were being nudged at all.

Responsibility for these behaviors was attributed in different ways: to companies and developers, to the model itself, or to users. Nobody had a clear answer for whose problem it was, which means it’s everyone’s problem and nobody’s priority.

Your team may not even be aware that conversational dark patterns exist, but the AI writing your microcopy, drafting your onboarding flow, or generating your support bot dialogue may be nudging people in directions they never asked to go, in a voice that sounds perfectly reasonable.

Ethical design starts with you

LLMs have no self-correcting mechanism. They ship whatever the web taught them was normal, and the web taught them that manipulation converts.

The designers, product managers, and CDOs who still believe in ethical, accessible products are the only real line of defense. They are responsible for auditing AI-generated output before it ships (focusing on intent), writing prompts that are specific about what they want to achieve (and what they will not do), and treating ethical design as a constraint to engineer around.
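Part of that audit can be automated as a first pass. The sketch below uses Python's standard `html.parser` module to flag two mechanically detectable signs in generated markup: checkboxes that arrive pre-checked and a handful of urgency phrases. The phrase list and the `audit_html` helper are assumptions for illustration. A check like this cannot judge intent, so it supports a human review rather than replacing it.

```python
from html.parser import HTMLParser

# Illustrative phrases only; a real review list would be longer and product-specific.
URGENCY_PHRASES = ("only a few left", "offer ends soon", "hurry", "last chance")


class DarkPatternAudit(HTMLParser):
    """Collects a few mechanically detectable warning signs in generated HTML."""

    def __init__(self) -> None:
        super().__init__()
        self.findings: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        input_type = (attr_map.get("type") or "").lower()
        # A checkbox that arrives already ticked makes the choice for the user.
        if tag == "input" and input_type == "checkbox" and "checked" in attr_map:
            name = attr_map.get("name") or "unnamed"
            self.findings.append(f"pre-checked checkbox: {name}")

    def handle_data(self, data):
        text = data.lower()
        for phrase in URGENCY_PHRASES:
            if phrase in text:
                self.findings.append(f"urgency cue: {phrase!r}")


def audit_html(markup: str) -> list[str]:
    """Return human-readable findings for a reviewer to confirm or dismiss."""
    parser = DarkPatternAudit()
    parser.feed(markup)
    parser.close()  # flush any text still buffered at the end of the input
    return parser.findings


if __name__ == "__main__":
    sample = (
        '<form><input type="checkbox" name="insurance" checked>'
        "<p>Hurry, offer ends soon!</p><button>Buy</button></form>"
    )
    for finding in audit_html(sample):
        print(finding)
```

An empty result does not mean the component is clean. Visual tricks such as low-contrast decline links or exhausting cancellation flows still need a person looking at the rendered page.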

Remember that LLMs don’t have ethical principles, so don’t assume they do.

Arin Bhowmick (@arinbhowmick) is Chief Design Officer at SAP, based in San Francisco, California. The above article is personal and does not necessarily represent SAP’s positions, strategies or opinions.

Originally published in UX Collective on Medium.