
The chat box isn’t a UI paradigm. It’s what shipped.

Chat is the AI interface that shipped fastest, not the one that worked. The 2024 retrofits prove it.

I wrote this essay from firsthand experience building AI-adjacent products. I used Claude Code (an AI coding assistant) for structural feedback and copy editing on the draft. The arguments, citations, and conclusions are my own.

Disclosure: I build browser extensions that wrap around major AI chat products. That means I see the usability friction firsthand, and I have a commercial interest in a world where chat is not the only AI surface. I disclose both so readers can factor those biases into what follows.

Open any AI product launched in the last three years. Ignore the model, the logo, the branding. You will find the same interface: a text input at the bottom of the screen, a send button, and a scrollback of alternating messages.

This is not a random convergence. It is the interface that fell out of what large language models could do on day one: pattern-match on text. In 2022 we had a new capability and no time to design around it, so we shipped what was fastest to build and called it conversational AI. Three years later, the fastest thing to build has become the thing everyone builds. That is how defaults calcify.

1. The chat box is what shipped, not what works

The chat box became the default AI interface because language models produce text and text boxes accept text. The decision was about build speed, not user outcomes. A blank text field gives users no clue what the system can do, no structured place to express constraints, and no feedback about whether their request fell within the system’s competence. Before LLMs we would have treated that as a design failure.

Amelia Wattenberger made this case early. In Why Chatbots Are Not the Future of Interfaces (2023), she argued that a text field gives users “unclear affordances”: the same rectangle might be a search box, a credit card field, or a chatbot, and the user is left to guess. She also flagged the flow-state cost. Users alternate between implementation (typing) and evaluation (reading), interrupting the focus state that makes creative work possible.

Don Norman named the underlying cost decades before LLMs existed. His concepts of the gulf of execution (the gap between what the user wants and what the system lets them do) and the gulf of evaluation (the gap between what the system shows and what the user can understand) are the clearest vocabulary for why chat fails. Nielsen Norman Group has a current summary in The Two UX Gulfs: Evaluation and Execution. A chat box maximizes both gulfs at once. The user serializes intent into prose, the system returns prose, and the mapping between the two is whatever the user can infer without any help from the UI.

Three years after Wattenberger’s essay, her argument has aged well. Users are doing more work inside chat boxes than ever, and that work is getting longer, not shorter. Nielsen Norman Group’s Accordion Editing and Apple Picking research documented the pattern empirically: users rarely get what they want on the first try, so they refine through additional prompts. The word “conversation” is a euphemism for an iteration loop that the system forced on them.

2. We gave up decades of interface patterns to get here

The interface we replaced with the chat box had forty years of research behind it. Ben Shneiderman named the pattern direct manipulation in 1983: visible objects, rapid reversible actions, physical-feeling gestures. The next four decades added structured forms, progressive disclosure, contextual menus, drag-and-drop, undo stacks, autocomplete, and constraint solvers. AI products adopted almost none of it. The surface shrank to a rectangle.

Shneiderman’s central argument was about the locus of control. In a direct-manipulation interface, the user sees the object they are acting on and acts on it directly. There is no translation layer between intent and action. In a chat interface, every action goes through a compression step where the user has to serialize their intent into prose and hope the system can decompress it back into the right action. That compression is where most of the UX debt sits.

Bret Victor made a related argument more than a decade before ChatGPT. His 2006 essay Magic Ink calls interaction the last resort of information software. Good design presents the relevant information as a graphical surface first, and falls back to interactivity only when information alone cannot do the job. A chat-only AI inverts this completely. There is no information surface at all until the user has interacted with it, and the interaction itself is prose. Victor’s essay reads today like an early warning.

Maggie Appleton, a designer at Elicit who has written extensively on language-model interfaces, put the alternative bluntly in her Language Model Sketchbook. Most LLM implementations, she argues, should be “spell-check sized” and do one specific thing well. Her proposed interfaces are scoped, structured, and embedded: a highlight-to-rephrase tool, a one-click summarizer, a mouse-over explainer. None of them is a chat window.

3. The industry has already admitted this

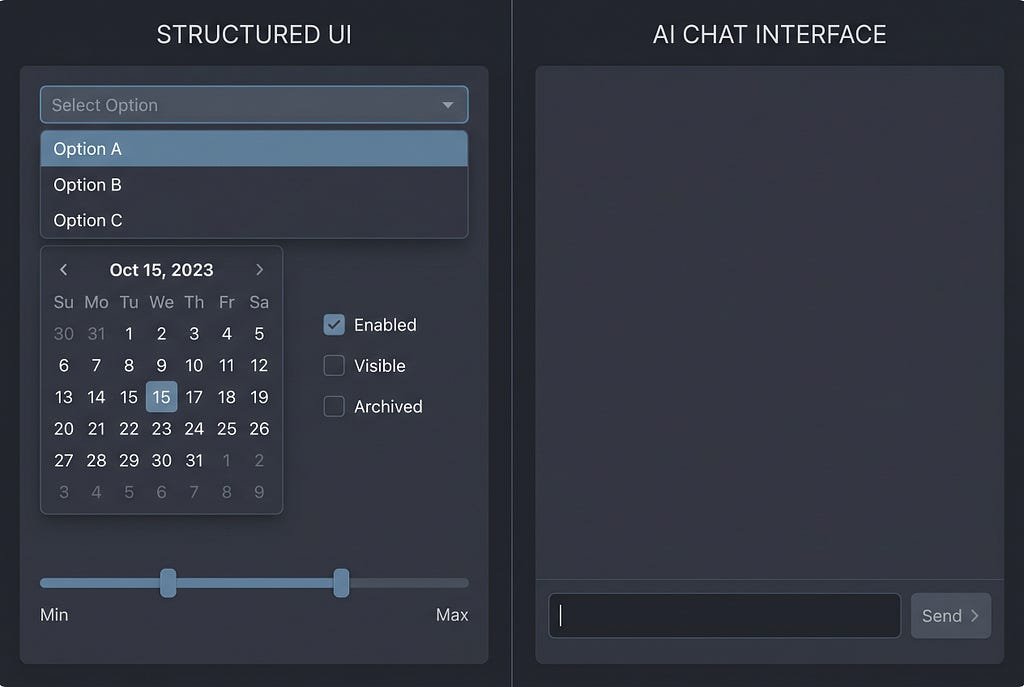

2024 is the year OpenAI, Anthropic, and Google each shipped GUI additions on top of the chat box. Seven retrofits in twelve months, across three labs. OpenAI opened the year with the GPT Store on January 10, a tile-based catalog that is not a conversation. In May, OpenAI announced GPT-4o with real-time voice, breaking the text-only send-and-scrollback pattern. Anthropic followed in June with Artifacts, a side panel that renders code and documents next to the chat, and Projects, a persistent workspace with file uploads and custom instructions. OpenAI returned in October with Canvas, a split-screen document editor (beta October 3, full release December 10). Anthropic shipped Computer Use on October 22, a mouse-and-keyboard agent that manipulates real desktop apps. Google closed the year with Deep Research on December 11, a multi-step research agent inside Gemini with a visible plan, progress panel, and editable outline. Each of these is a pattern borrowed back from the interface the chat box replaced.

Calling this progress is charitable. It is the industry discovering, retrofit by retrofit, that a text box alone cannot hold a meaningful creative surface. You cannot edit a thousand-line document by asking the bot to re-output it with “line 312 changed to X”. You cannot iterate on a design by describing it. You cannot plan a research project without seeing the plan. The moment the task has a structured output, the chat box becomes the wrong place to work, and the vendors put a canvas, a side panel, an editor, a workspace, or a planner next to it.

The pattern is admission, not innovation. Canvas, Artifacts, Projects, Computer Use, and Deep Research are what we would have built first if we had started from the user’s task rather than the model’s I/O shape. The fact that three labs arrived at seven retrofits in the same twelve months is the cleanest evidence that the original chat-only interface was under-designed for the work users actually do.

4. “Intent-based” is not the same as “chat-based”

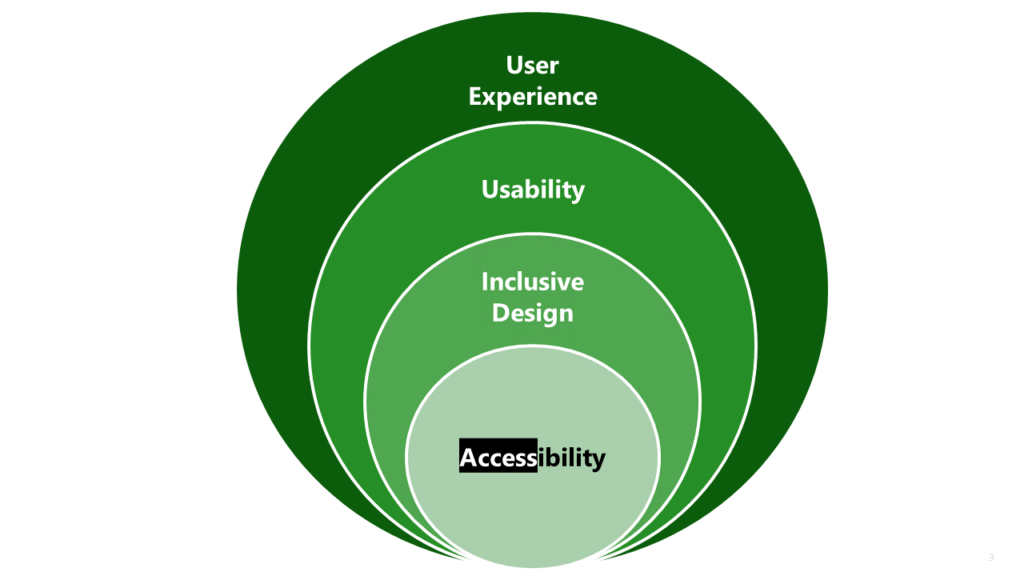

The most influential defense of chat as a UI paradigm comes from Jakob Nielsen, who argued in AI: First New UI Paradigm in 60 Years (June 2023) that AI represents the third paradigm in computing history: intent-based outcome specification. The user says what they want, not how to do it. The framing is correct and worth keeping. What is worth contesting is the quiet assumption that intent-based specification and chat-based specification are the same thing. They are not.

Expressing intent does not require prose. A date picker expresses temporal intent more precisely than any sentence. A pair of sliders expresses a tradeoff more legibly than a paragraph. A file upload expresses “work on this thing” without ambiguity. Every one of these is intent-based. None of them is chat. The chat box is one possible implementation of the paradigm, and by the available evidence it is a low-resolution one.

NN/g’s own follow-up research on the six types of conversations users have with generative AI supports this distinction. Different task types need different interfaces, and collapsing all six into the same text box forces users to carry the cost of discriminating between modes the UI could have distinguished for them. Intent-based is a valuable framing. Chat-only is the tax we pay for not finishing the design.

5. What post-chat AI UX looks like

Post-chat AI UX borrows from the GUI patterns the chat box displaced: visible affordances, structured input, direct manipulation of output, and scoped assistance tied to specific surfaces rather than a global text field that has to handle everything.

Alex Mohebbi made a related argument in UX Collective’s own Why Conversational Interfaces Are Taking Us Back to the Dark Ages of Usability, pointing out that the closest historical analogue to chat-only UIs is the command-line era that direct manipulation was invented to escape. The industry already has the pattern to borrow from. It just needs to borrow it.

Two designers who write regularly on LLM UX have been pointing at the same alternative from different angles. Linus Lee’s Imagining Better Interfaces to Language Models treats language models as tools to explore latent spaces of ideas, not as chat endpoints. Users manipulate the model’s path directly through visual affordances, not by prompting for the next response. Geoffrey Litt’s Malleable Software in the Age of LLMs (March 2023) argues that LLMs can finally give end-users the ability to bend software to their specific task, but only if the interface exposes the software’s structure. A chat window hides all structure by design. A post-chat UI surfaces it.

Concrete examples of post-chat surfaces already shipping: highlight-based rephrase tools in Google Docs, inline cell autocomplete in Notion and Cursor, one-click image variants in Figma, comment-to-commit flows in Linear. None of these require the user to write a prompt. All of them are intent-based in Nielsen’s sense. Each is smaller, more specific, and more usable than a chat box. Appleton’s “spell-check sized” framing predicted all of them, and the good AI UX work of the next three years will be distributed across a thousand of those scoped surfaces rather than concentrated in one generalized text field.

Key takeaways

- Chat is the interface that shipped, not the interface that works. It became the default because LLMs produce text and text boxes accept text, not because anyone designed it.

- We gave up forty years of direct-manipulation research to get here. Shneiderman’s 1983 argument about visible objects, reversible actions, and no syntax layer still holds. Norman’s gulfs of execution and evaluation name the cost.

- Canvas, Artifacts, Projects, Computer Use, and Deep Research are not new paradigms. They are the GUI patterns the chat box removed, re-added on top within twelve months across three labs.

- Intent-based specification is a useful framing. Chat-based specification is not the same thing and should not be conflated with it. Date pickers, sliders, and file uploads are all intent-based and usually better.

- The next three years of AI UX will be distributed across smaller, scoped, structured surfaces, not a thousand more chatbots. Linus Lee, Geoffrey Litt, and Maggie Appleton have already sketched what that looks like.

Follow me on Medium for more essays on AI UX and the design reality of building for global audiences. If you think the chat box is the worst interface we could have picked, what have you seen that does better?

About the author: Adi Leviim is a full-stack engineer and product builder with 7+ years of experience shipping commercial software to global audiences. He writes about AI UX, the design reality of building for millions of users, and the gap between AI demos and production AI. Follow him on Medium for essays at the intersection of engineering and design.

“The chat box isn’t a UI paradigm. It’s what shipped.” was originally published in UX Collective on Medium, where people are continuing the conversation by highlighting and responding to this story.