
Q/A: Lumotive CTO talks software-defined optical sensing

A coming wave of robots and autonomous vehicles will need perception systems that are faster and smarter. To get there, Lumotive founder and CTO Gleb Akselrod believes programmable optics that steer light are needed to enable software-defined sensing. A byproduct of this effort will be fewer of the bottlenecks that hold back today’s autonomous systems.

Fierce caught up with Akselrod to hear his vision, which he’ll share in a session at Sensors Converge in May titled “Unlocking the vision AI machines need to transform industries.”

Fierce: Hi Gleb, can you offer a quick description of your session at Sensors Converge on “unlocking the vision AI machines need…”?

Akselrod: AI systems are only as good as the data they receive. Today’s robots and autonomous machines often rely on static, fixed-field sensors that treat every part of a scene the same way. But real-world environments are dynamic — some areas require long-range detection, others need high-resolution near-field awareness, and priorities change moment to moment.

“Unlocking the vision AI machines need” means giving machines adaptive, software-defined 3D perception. By using programmable optics to electronically steer and shape light, we enable sensors to focus exactly where and when it matters. This allows robots and intelligent systems to gather the right data at the right time — improving safety, efficiency, and decision-making.

Fierce: What’s the dilemma that robots and other machines face when making decisions? Is the data not coming fast enough as you imply, and what does that mean?

Akselrod: The core dilemma is not simply speed — it’s relevance and efficiency.

Most sensing systems collect massive amounts of uniform data across a fixed field of view, regardless of what’s actually important in the scene. That creates two challenges:

- Critical regions may not receive enough attention (for example, a fast-moving object entering the path of a robot).

- Processing resources are wasted on areas that don’t require detailed updates.

In fast-moving industrial environments, milliseconds matter. If perception systems can’t adapt dynamically — increasing frame rate in one region while reducing it in another — decision-making becomes slower, less precise, or more power-hungry.

The real issue isn’t just that data isn’t coming fast enough — it’s that it isn’t coming intelligently enough.
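To make the idea of raising the frame rate in one region while lowering it in another concrete, here is a minimal Python sketch. It is an illustration only and not Lumotive's implementation: the `Region` class, the `allocate_frame_rates` function, the priority weights, and the budget numbers are all hypothetical. It assumes the sensor has a fixed total update budget that can be divided among regions of the scene.

```python
from dataclasses import dataclass


@dataclass
class Region:
    name: str
    priority: float  # hypothetical, non-negative weight; higher means more attention


def allocate_frame_rates(regions, total_hz, min_hz=1.0):
    """Split a fixed update budget (total_hz) across regions by priority.

    Every region keeps at least min_hz so nothing goes unobserved;
    the remaining budget is shared in proportion to priority.
    """
    if total_hz < min_hz * len(regions):
        raise ValueError("budget too small to give every region min_hz")
    spare = total_hz - min_hz * len(regions)
    weight_sum = sum(r.priority for r in regions)
    if weight_sum <= 0:
        # No region stands out, so share the spare budget evenly.
        return {r.name: min_hz + spare / len(regions) for r in regions}
    return {r.name: min_hz + spare * r.priority / weight_sum for r in regions}


# Example: an object enters the robot's path, so that region gets a higher weight.
rates = allocate_frame_rates(
    [Region("robot_path", 3.0), Region("side_aisle", 1.0)],
    total_hz=40.0,
    min_hz=5.0,
)
print(rates)
```

The total update rate stays the same in this sketch. Only its distribution changes, which is the sense in which data arrives "more intelligently" rather than simply faster.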

Fierce: What is meant by programmable optics and electronically steering light?

Akselrod: Programmable optics refers to the ability to control how light is directed, shaped, and distributed using software.

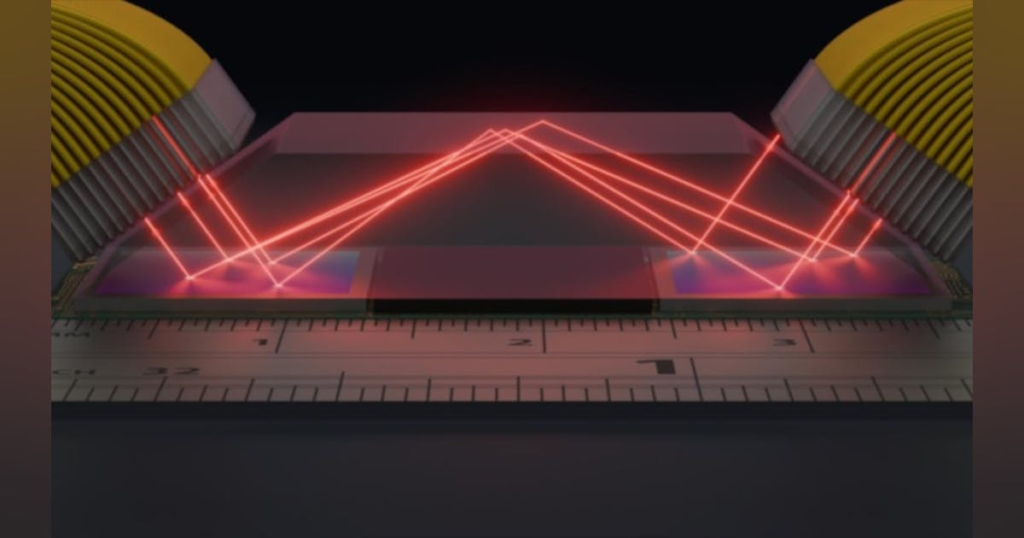

Traditionally, LiDAR and optical systems rely on mechanical movement or fixed optical components to scan a scene. With programmable optics, light steering happens electronically — without moving parts. A solid-state optical chip can dynamically adjust beam direction, field of view, scan resolution, and region-of-interest selection in real time.

Electronically steering light means we can direct laser beams precisely to specific areas in a scene through digital control — similar to how a processor routes electrical signals, but in this case we’re routing photons. This allows perception systems to adapt instantly to changing environments.

Fierce: Can you offer a couple of examples of products or processes that are helping set a new standard for intelligent sensing?

Akselrod: We’re seeing strong momentum in industrial robotics, warehouse automation, and smart infrastructure.

One strong example is autonomous mobile robots operating in warehouses. These systems must simultaneously monitor the floor for obstacles, track pallets at mid-range, and detect people or forklifts at longer distances. Traditionally, that requires multiple sensors or compromises in coverage. Software-defined sensing allows a single system to dynamically manage multiple zones, allocating resolution and frame rate where it’s needed most.

Another important example is the concept of a “safety cocoon” around heavy equipment and autonomous trucks. In mining, construction, ports, and logistics yards, large vehicles operate in complex environments where human workers, other vehicles, and infrastructure are constantly moving. A safety cocoon requires continuous 360-degree awareness, high reliability, and the ability to prioritize fast-moving or high-risk zones in real time.

Programmable sensing allows operators to adapt coverage dynamically — for example, increasing update rates in blind-spot areas, extending range in the direction of travel, or focusing on pedestrian-heavy zones. This kind of adaptive perception is critical for improving safety outcomes while reducing the need for stacking multiple fixed sensors around a vehicle.

In both cases, the move toward software-defined, solid-state optical platforms is helping reduce hardware complexity while increasing intelligence, flexibility, and real-world performance.
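The safety cocoon example can be sketched in a few lines of Python as well. This is a hedged illustration under stated assumptions, not a description of any real product: the sector layout, the 45-degree travel cone, the `boost` value, and the function names `angular_gap` and `sector_weights` are all hypothetical. It shows one simple way a 360-degree coverage plan could weight sectors that lie in the direction of travel or cover known blind spots.

```python
def angular_gap(a_deg, b_deg):
    """Smallest absolute angle between two bearings, in degrees (0 to 180)."""
    d = abs(a_deg - b_deg) % 360.0
    return min(d, 360.0 - d)


def sector_weights(sector_centers_deg, heading_deg, blind_spots_deg=(), boost=2.0):
    """Assign a relative attention weight to each sector around a vehicle.

    Each sector starts at a baseline weight of 1.0. Sectors within 45 degrees
    of the heading get a boost, and so do sectors listed as blind spots.
    """
    weights = {}
    for center in sector_centers_deg:
        w = 1.0
        if angular_gap(center, heading_deg) <= 45.0:
            w += boost  # direction of travel
        if center in blind_spots_deg:
            w += boost  # known blind spot
        weights[center] = w
    return weights


# Example: four 90-degree sectors, vehicle heading 350 degrees, rear blind spot.
print(sector_weights([0, 90, 180, 270], heading_deg=350, blind_spots_deg=(180,)))
```

Weights like these could feed a budget split across sectors, so that the forward and blind-spot sectors are updated more often than the sides.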

Editor’s Note: Gleb Akselrod’s session on unlocking vision for AI machines will be held at 4 pm PT on Wednesday, May 6, at Sensors Converge in Santa Clara, CA. Go online to register and find more information.