
A fork in the road for sensor fusion in automotive design

From Left: Matt Pollock, Sean Murphy, Caroline Hayes, Ahsan Qamar

This was explored in a panel discussion at Microelectronics US, moderated by Electronics Weekly’s editor, Caroline Hayes. Matt Pollock, senior industry specialist solution architect at AWS, confirmed the proliferation of ML. “The industry [focus] has been around the LLMs, and what we can do with those language models. However, we’re seeing a lot of the more traditional ML type workflows, for example, perception algorithms. Camera data, that’s going to recognise a pedestrian, a chicken, a bicycle, doesn’t generally require an LLM to function. Similarly, if we’re doing data analytics and identifying transit patterns within data on the vehicles, that’s going to be more on the ML side. And I see more of the interactive transformer or modifying text or speech or human interactions, more on the [LLM] side,” he said.

Function algorithms

Ahsan Qamar, senior manager systems engineering and test, Ford, added that ML algorithms represent a change because perception or autonomous driving function algorithms were based on creating deterministic control systems. “So essentially, there was this area about how you take these machine learning algorithms and qualify them for safety. Some areas where this has made rapid progress is speech-to-text or speech connection with edge device.”

Sean Murphy, director of product for CPU IP at MIPS, believes that latency will drive the increased use of AI. “ADAS has been around a while now, but is certainly new from an application point of view. The only thing I’d add from a compute perspective is this is not GW of power of training, this is not average throughput-based, this is now real-time latency-based. This is compute at the edge. You can’t rely on the cloud to determine where a pedestrian is coming from and whether you need to stop driving; you have to do that on vehicle compute. Latency means distance, so that [determines] stopping distance. These are all completely different problems: you’re talking worst case execution times of less than 50ms.”
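Murphy's point that latency means distance can be made concrete with simple arithmetic: a vehicle keeps moving at its current speed while the compute is still deciding. The sketch below is illustrative only. The 100km/h speed and the 100ms and 500ms comparison latencies are assumptions chosen for the example; only the 50ms figure comes from the panel.

```python
def distance_during_latency(speed_kmh: float, latency_ms: float) -> float:
    """Metres travelled at constant speed before the system has reacted."""
    speed_m_per_s = speed_kmh * 1000.0 / 3600.0
    return speed_m_per_s * (latency_ms / 1000.0)


if __name__ == "__main__":
    speed_kmh = 100.0  # assumed motorway speed, not from the panel
    for latency_ms in (50, 100, 500):  # 50ms is the quoted worst case
        metres = distance_during_latency(speed_kmh, latency_ms)
        print(f"{latency_ms}ms -> {metres:.2f}m")
    # 50ms -> 1.39m
    # 100ms -> 2.78m
    # 500ms -> 13.89m
```

This distance is added to the braking distance itself, which is why a worst-case bound matters more here than average throughput.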

Reducing latency will result from improved compute performance in vehicles, said Pollock. “With some of the latest silicon, the amount of compute power that can go on four wheels is massively larger than it has been 5-10 years ago. For example, the Nvidia-oriented Thor is about seven and a half times performance increase for about double the cost.” Better silicon unlocks a lot of possibilities in terms of running more complex models and getting the performance higher, he added. Another element to improved performance is going to be “ruthlessly optimising the algorithms themselves for maximum performance and minimal extra computation,” he said.

Qamar also emphasised the importance of algorithm design for latency. Just as a driver makes some decisions quickly, for example whether to slow down or accelerate, others can be made at a slower rate. For this, a vehicle will need a control system that separates a local reflex, which has to be fast, from a central reserve, which can be slower but is able to make more cognitive, high-level policy decisions. “As a result, the compute that these two types of algorithms require is also slightly different, because one is hard, real-time, micro- recovery-based, and the other one is more associated.”
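The split Qamar describes, a fast reflex alongside a slower policy layer, resembles a two-rate control loop. The following is a minimal simulated sketch, not Ford's architecture: the names (`reflex_step`, `policy_step`), the thresholds and the 10:1 rate ratio are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VehicleState:
    obstacle_distance_m: float
    target_speed_kmh: float = 50.0
    braking: bool = False


def reflex_step(state: VehicleState, brake_threshold_m: float = 10.0) -> None:
    """Fast path: runs on every tick and only decides whether to brake."""
    state.braking = state.obstacle_distance_m < brake_threshold_m


def policy_step(state: VehicleState) -> None:
    """Slow path: runs occasionally and sets a higher-level target speed."""
    state.target_speed_kmh = 30.0 if state.obstacle_distance_m < 50.0 else 50.0


def run(distances, policy_every: int = 10):
    """Replay a list of obstacle distances, one per fast-loop tick."""
    state = VehicleState(obstacle_distance_m=distances[0])
    log = []
    for tick, distance in enumerate(distances):
        state.obstacle_distance_m = distance
        reflex_step(state)                 # every tick
        if tick % policy_every == 0:
            policy_step(state)             # every policy_every ticks
        log.append((tick, state.braking, state.target_speed_kmh))
    return log


if __name__ == "__main__":
    # Obstacle appears at tick 5; the policy layer only catches up at tick 10.
    for entry in run([100.0] * 5 + [5.0] * 10):
        print(entry)
```

In the replay, the reflex brakes on the same tick the obstacle appears, while the target speed changes only when the slower layer next runs.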

RISC-V

Murphy added that RISC-V is going to play a part in AI components. He sees RISC-V CPUs with a vector engine offering scalability and software portability, with different models for different needs; there will be slow and fast models, he said. “Changing the vector elements, changing matrix [multiply] sizes, sizing the hardware for the model, and what the problem is you’re trying to solve, but also maintaining some amount of software compatibility across that same hardware is really going to lock in a lot of benefits,” he said.

“From a portfolio perspective, or from a compute perspective, you have to have the range from super-heavy, real-time actuation all the way up to [components] that keep learning. . . You will have solutions for each stage of the physical AI spectrum where you’re sensing, thinking, acting and communicating,” he added.

Sensor fusion

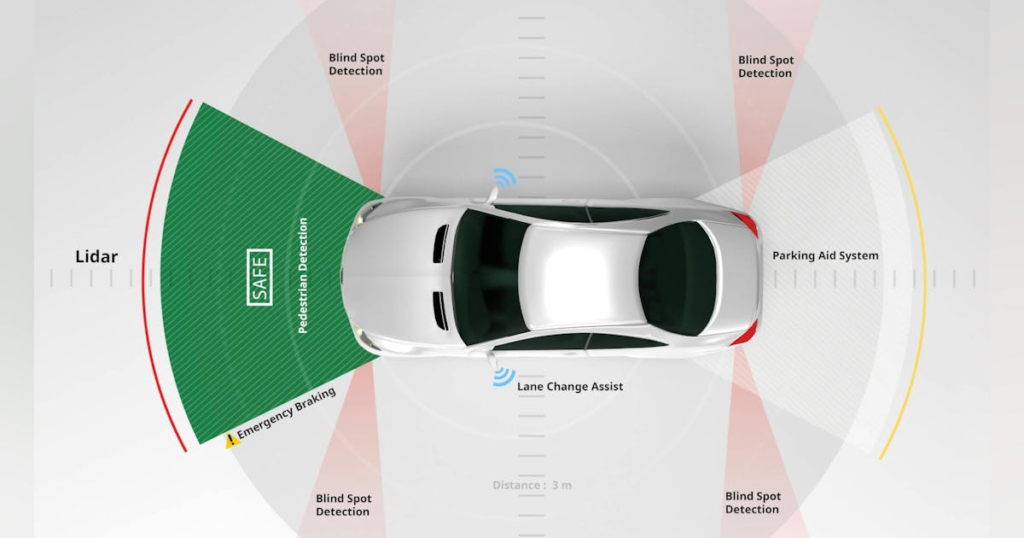

Naturally, Pollock focuses on augmenting physical data sets for perception functions. These are acquired by cameras, lidar and radar, with synthesis on top to explore more of the rarer events or the rarer scenarios. He cited the example of inserting a chicken crossing the road into a scene where you might not normally have one, “to see what’s going to happen with that perception algorithm and to take a recording of what occurred under good weather conditions, and later on, what happens in fog, what happens in rain, what happens in Texas hail. These are things that you might not normally be able to plan for in a testing campaign, but are going to be critical to the operation of the vehicle and the features in the vehicle”.
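As a rough illustration of replaying a good-weather recording under fog, the sketch below applies the standard atmospheric scattering model, in which each pixel is blended towards a uniform "airlight" according to its depth. This is a generic technique, not a description of AWS tooling; the function name `add_fog` and the `beta` and `airlight` values are assumptions.

```python
import math


def add_fog(pixels, depths_m, beta=0.05, airlight=200.0):
    """Blend greyscale pixels towards airlight based on per-pixel depth.

    pixels   -- 2D list of intensities (0-255) from a clear-weather frame
    depths_m -- 2D list of distances in metres, same shape as pixels
    beta     -- assumed scattering coefficient; larger means denser fog
    airlight -- assumed intensity of the fog itself
    """
    foggy = []
    for pixel_row, depth_row in zip(pixels, depths_m):
        out_row = []
        for intensity, depth in zip(pixel_row, depth_row):
            transmission = math.exp(-beta * depth)  # 1.0 at the camera
            out_row.append(intensity * transmission
                           + airlight * (1.0 - transmission))
        foggy.append(out_row)
    return foggy


if __name__ == "__main__":
    frame = [[50.0, 50.0, 50.0]]
    depth = [[0.0, 20.0, 200.0]]   # near, mid and far pixels
    print(add_fog(frame, depth))
```

Nearby pixels are left almost unchanged while distant ones wash out towards the fog colour, which is the effect a perception model would have to cope with.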

Qamar added: “You also have to think about what kind of sensor loopholes you have and look at them from a vertical perspective. One example is that you classify or detect an object – that comes under ISO 26262, but you may also have to address safety mechanisms or redundant sensing. There is a lot of debate about redundant sensing, in terms of machine learning model stem cells, and how they take the sensor fusion part, and how they take the disturbance from one sensor, and then it can increase the overall disturbance of the fusion sensor.” As well as the ML pipeline for this, using a deterministic discrete controller, there is also an end-to-end pipeline, he explained, where data is trained not in terms of classifying the object, but what the reaction needs to be for that object. “I think there are multiple prototypes for those available, and each has pros and cons,” he admitted.
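Qamar's remark that a disturbance on one sensor can raise the disturbance of the fused output can be shown with a small variance calculation. The sketch assumes two sensors with independent errors combined by a weighted average; the variances (1 and 25) are made-up numbers, and inverse-variance weighting is one textbook option rather than the method discussed by the panel.

```python
def fused_variance(variances, weights):
    """Variance of a weighted average of independent sensor estimates."""
    total = sum(weights)
    return sum((w / total) ** 2 * v for w, v in zip(weights, variances))


def inverse_variance_weights(variances):
    """Weights that trust each sensor in proportion to 1 / variance."""
    return [1.0 / v for v in variances]


if __name__ == "__main__":
    healthy = [1.0, 1.0]     # both sensors behaving normally
    degraded = [1.0, 25.0]   # second sensor disturbed (assumed figures)

    # Equal weights: fine when healthy, harmful once one sensor degrades.
    print(fused_variance(healthy, [1.0, 1.0]))    # 0.5
    print(fused_variance(degraded, [1.0, 1.0]))   # 6.5

    # Re-weighting by known variance keeps fusion better than either sensor.
    weights = inverse_variance_weights(degraded)
    print(round(fused_variance(degraded, weights), 4))  # 0.9615
```

With fixed equal weights, the fused estimate (variance 6.5) ends up worse than the healthy sensor alone (variance 1), which is the failure mode that redundant sensing has to guard against.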

Murphy identified another trend in sensor fusion. “You have to pick the right sensor for the right job,” he said. The first category is camera sensors. Companies like Tesla are placing a lot of compute on the vehicle’s cameras, judging distance, for example, rather than using a different sensor for this task, whereas other companies are using sensor fusion, Murphy explained. Cameras are relatively inexpensive and provide good semantic data (i.e., reading stop signs, traffic signals), but judging distance with them is very compute-heavy. Lidar sensors are expensive but provide very good resolution, especially with regard to the imaging accuracy of a scene. Radar sensors are probably the most weather-robust, he continued, but cannot match the accuracy of lidar. If imaging radar progresses and costs come down, it may replace lidar altogether, he suggested.

“Weather is the big hurdle in all these systems,” Murphy continued. They work well in bright light with clear skies, but cameras do not work as well in dark conditions and lidar does not work well in rain and fog. “One of the easy initial steps is these models starting to weight the right sensor for the given weather condition,” he proposed. In other words, the system would assume radar is correct and weight perception more towards it, even if the camera is saying there is nothing to see. “This creates a lot of interesting test issues, and – whether that’s hardware [or] software – I think that is one of the main challenges facing ADAS,” he said.
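Weighting the right sensor for the given weather condition could, in its simplest form, be a lookup of per-condition weights applied to each sensor's detection confidence. The sketch below is hypothetical: the weight table, the condition names and the function `fused_confidence` are invented for illustration and do not come from MIPS or any production ADAS stack.

```python
# Assumed weights per weather condition; real systems would learn or tune these.
WEATHER_WEIGHTS = {
    "clear": {"camera": 0.5, "lidar": 0.3, "radar": 0.2},
    "fog": {"camera": 0.1, "lidar": 0.2, "radar": 0.7},
    "night": {"camera": 0.15, "lidar": 0.45, "radar": 0.4},
}


def fused_confidence(detections, weather):
    """Weighted average of per-sensor obstacle confidences (0.0 to 1.0).

    detections -- e.g. {"camera": 0.05, "radar": 0.9}; sensors may be missing
    weather    -- a key of WEATHER_WEIGHTS
    """
    weights = WEATHER_WEIGHTS[weather]
    total = sum(weights[sensor] for sensor in detections)
    weighted = sum(weights[sensor] * confidence
                   for sensor, confidence in detections.items())
    return weighted / total


if __name__ == "__main__":
    # Camera sees almost nothing, radar is fairly sure there is an obstacle.
    readings = {"camera": 0.05, "lidar": 0.4, "radar": 0.9}
    print(round(fused_confidence(readings, "clear"), 3))  # 0.325
    print(round(fused_confidence(readings, "fog"), 3))    # 0.715
```

The same readings fall below a 0.5 decision threshold in clear weather but exceed it in fog, where radar is trusted more. That sensitivity to the weights is one reason such schemes create the test issues Murphy mentions.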

Qamar agreed that minimising fusion error is going to be a challenge for testing. He referred to research papers on the disturbance of the fused output increasing, in some cases exponentially. The challenge is generating synthetic data and scene perception to run in a virtual environment while interactively introducing those disturbances as physical disturbances. Seeing their impact will require a lot of work and, to date, the prototypes are not running accurately.

Pollock is more optimistic. “My view is that the integration problem [of] sensor fusion, if solved properly, gives a better ceiling to performance than what you’re going to get just from cameras alone, because of the different modalities of the sensors themselves. It’s going to take a lot of virtualised testing to be able to run test cases in parallel, to crunch those scenarios and get as much coverage as possible to identify those edge cases. It’s a ton of work, but in my opinion, that’s the higher value outcome that has a better ceiling on the performance,” he said.
